Hello.

How was Diwali for you? I hope you had fun. I had a blast - I finally found that I could fit into ready-made Fabindia kurtas. Imagine that. I still look like a beached whale but it’s better than looking like Jabba the Hutt.

Much has happened in the past month:

The Middle East seems to have gone back 50 years in terms of peace prospects.

India lost the WC final.

OpenAI drama.

The Supreme Court rapped the knuckles of lazy governors.

Trump might be back.

Puteketeke is the bird of the century. The whole saga of John Oliver trolling an entire country, helped along by people the world over, is the funniest thing in a long, long while.

Now, on to our main programming for this edition - I do not normally have takes on the hottest, latest thing. For one, the information is very frothy; more importantly, it takes time to figure out what is really going on and separate the facts from the PR spin.

But the whole OpenAI drama is too juicy to pass up.

To summarize very briefly:

On Friday, Sam Altman was fired by OpenAI’s board, which claimed he was “less than candid” [1]. Greg Brockman resigned as well.

Much drama ensued on Twitter, including cryptic tweets, heart emojis, employees giving the board ultimatums and commentators of every stripe spewing all kinds of “analysis” on every imaginable online outlet. One enterprising I-bank even organized a Zoom call on “what the extraordinary developments at OpenAI mean for the AI landscape going forward”.

Over the weekend, rumors started flying thick and fast that the board was facing a revolt and was negotiating Sam’s return to OpenAI. OpenAI in turn hired ex-Twitch CEO Emmett Shear as the new CEO.

Finally, on Monday morning, Satya Nadella announced via tweet that Altman and Brockman are joining Microsoft - “together with colleagues”. It is very likely that a lot of OpenAI’s IP and human capital is leaving with Sam Altman as well.

1. Motabhai pulls a fast one.

Let me present a story. For any and all purposes, this is a complete fabrication and completely made up.

Once upon a time, there was a visionary retailer. He saw that a certain very large and populous country’s retail landscape was a mess. So he started bringing the modern retail concept to this land. He was very successful and widely regarded as the father of an entire industry. However, time passed and the visionary retailer was unable to resist the inexorable pull of gravity. And so it came to pass that eventually the visionary retailer found himself in a debt trap. Now, there is a lot that happened in this story - there was a large online company that invested and contractual assurances about not selling the assets to a third party were made but it is immaterial for the point being made here.

A mogul, let’s call him Motabhai, saw an opportunity but he didn’t really want to pay for the retail assets. So here is what he did. He made an offer to acquire the visionary retailer. While that offer got tied up in legal challenges, he slowly started acquiring leases to the stores of the visionary retailer. Eventually, Motabhai acquired all the leases to the best stores of the visionary retailer. As the visionary retailer defaulted on rent payments, Motabhai started taking over the stores and running them under his systems.

And so, one day, the creditors and investors of the visionary retailer woke up only to find that Motabhai had pulled a very, very fast one on them. He had pulled his offer to acquire the retailer’s company. They still had all the debt and issues, but somehow all their cash-generating assets had been taken away right from under them. As far as sleights of hand go, David Copperfield and the likes of Penn and Teller have nothing on Motabhai.

2. Satyabhai pulls a Motabhai

Let us go back a bit and see what all the drama at OpenAI means for Microsoft. It has been one of the largest investors in OpenAI and was among the first few to actually start baking AI into its products. Microsoft already has a perpetual license to all of OpenAI’s IP (but not artificial general intelligence) - this includes source code but also (and these are really the crown jewels) model weights.

The company that stood to lose the most if OpenAI imploded was Microsoft. OpenAI’s biggest strength is its people. Did Microsoft have a hit-by-a-truck scenario? Probably not.

But as the events of the last 5 days have proved, Nadella has effectively bought out an $86 billion company for ZERO. Clean, legal, nothing OpenAI can do about it.

I mean, sure, you could argue that it actually cost Microsoft $10bn but that optionality is worth trillions if not more.

That said, now that we are here, let’s see why anything but a for-profit company is a terrible way to set up anything that needs private capital.

3. Breaking down the OpenAI non-profit model

From OpenAI’s website:

“OpenAI is a non-profit artificial intelligence research company. Our goal is to advance digital intelligence in the way that is most likely to benefit humanity as a whole, unconstrained by a need to generate financial return. Since our research is free from financial obligations, we can better focus on a positive human impact. We believe AI should be an extension of individual human wills and, in the spirit of liberty, as broadly and evenly distributed as possible. The outcome of this venture is uncertain and the work is difficult, but we believe the goal and the structure are right. We hope this is what matters most to the best in the field.”

Now, I was a bit cynical when I read this stuff in 2016 or thereabouts. Mostly because of Elon. He has since proved me right about my estimation of him given the whole Twitter situation. But the non-profit model seemed to make sense. A few people of the Hinton camp I have had the incredible luck of knowing are folks who really believed in the future of AI but did not sleep well at night knowing that it is a tech that will need stewardship. Surely they would all be in (and they were).

Now, I really believed that talk is cheap. That really this will work out to the benefit of YCombinator and Tesla above everyone else. I was pleasantly surprised by the fact that they turned the company into a legal non-profit. From the very first filing in 2016:

“OpenAI’s goal is to advance digital intelligence in the way that is most likely to benefit humanity as a whole, unconstrained by a need to generate financial return. We think that artificial intelligence technology will help shape the 21st century, and we want to help the world build safe AI technology and ensure that AI’s benefits are as widely and evenly distributed as possible. We’re trying to build AI as part of a larger community, and we want to openly share our plans and capabilities along the way.”

Okay. So far so good. But then things started getting diluted.

Two years later the line about openly sharing plans and capabilities was gone. They just cut the last, unimportant line of the para. That’s OK. Plans change.

Fast forward three years to 2021 and they cut the lede. Gone was the goal of advancing digital intelligence. Now OpenAI would boldly build general-purpose artificial intelligence.

About 2018 - Elon tried to horn in and take over the whole company. He was politely asked to get lost. In a huff, Elon pulled an Elon and left the board and stopped paying for OpenAI’s ops.

You know, like the rich kid in the colony who is a shitty batsman but he has all the equipment and goes home with his bat and ball once he gets clean bowled on a full toss on the first ball. You know who I am talking about - we all know one. If you don’t know one, I have bad news for you - you are likely the Elon equivalent here.

Anyway, coming back to matters of importance - once Elon pulled an Elon, OpenAI needed money - and so Sam created OpenAI Global LLC - a capped profit company with Microsoft as a minority owner.

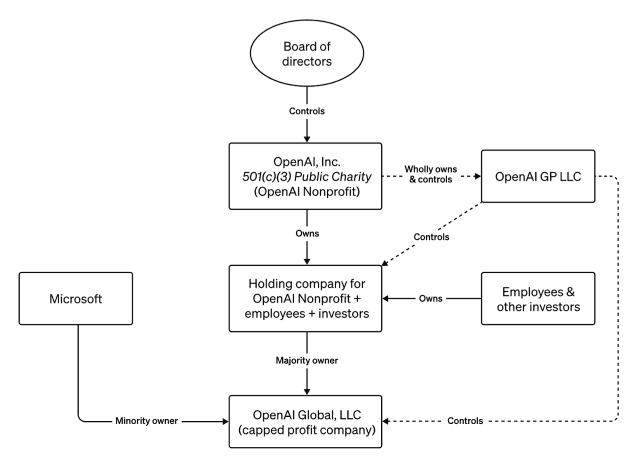

And so, this is what the ownership of OpenAI looks like:

So, OpenAI Global (OAG) could raise and make money, but still operated subordinate to the non-profit and its mission. To quote from their agreement [2]:

The Company exists to advance OpenAI, Inc.’s mission of ensuring that safe artificial general intelligence is developed and benefits all of humanity. The Company’s duty to this mission and the principles advanced in the OpenAI, Inc. Charter take precedence over any obligation to generate a profit. The Company may never make a profit, and the Company is under no obligation to do so. The Company is free to re-invest any or all of the Company’s cash flow into research and development activities and/or related expenses without any obligation to the Members.

So, is profit the dealbreaker here?

4. The Cash Cow called ChatGPT

ChatGPT was released towards the end of 2022. People (including me) collectively lost their shit. Today ChatGPT has over 100 million weekly users and over $1 bn in revenues. It is what everyone means today when they talk about AI.

One of the best takes on this came from Ben Thompson who wrote:

“When it comes to meaningful consumer tech companies, the product is actually the most important. The key to consumer products is efficient customer acquisition, which means word-of-mouth and/or network effects; ChatGPT doesn’t really have the latter (yes, it gets feedback), but it has an astronomical amount of the former. Indeed, the product that ChatGPT’s emergence most reminds me of is Google: it simply was better than anything else on the market, which meant it didn’t matter that it came from a couple of university students (the origin stories are not dissimilar!). Moreover, just like Google — and in opposition to Zuckerberg’s obsession with hardware — ChatGPT is so good people find a way to use it. There isn’t even an app! And yet there is now, a mere four months in, a platform.”

The platform he was referring to was ChatGPT plugins. It was a compelling concept and worked well until it didn’t. Even I have waxed eloquent about how awesome it was. But it fell short. A few weeks ago, at OpenAI’s first Dev Day, they announced custom GPTs [3]. You can read the footnote for an explanation.

On the side, rumors flew thick and fast. Sam was building consumer companies. Sam was striking deals for chips and hardware. Sam was in cahoots with Nvidia. All apparently without the board’s knowledge. Anyway, the larger point is that at some point, there was a straw that broke the proverbial camel’s back and pissed off Chief Scientist Ilya Sutskever no end. Or maybe the Quora guy whose entire Poe business got rogered once custom GPTs were announced. Anyway, to quote the good folks at The Atlantic:

Altman’s dismissal by OpenAI’s board on Friday was the culmination of a power struggle between the company’s two ideological extremes—one group born from Silicon Valley techno optimism, energized by rapid commercialization; the other steeped in fears that AI represents an existential risk to humanity and must be controlled with extreme caution. For years, the two sides managed to coexist, with some bumps along the way.

This tenuous equilibrium broke one year ago almost to the day, according to current and former employees, thanks to the release of the very thing that brought OpenAI to global prominence: ChatGPT. From the outside, ChatGPT looked like one of the most successful product launches of all time. It grew faster than any other consumer app in history, and it seemed to single-handedly redefine how millions of people understood the threat — and promise — of automation. But it sent OpenAI in polar-opposite directions, widening and worsening the already present ideological rifts. ChatGPT supercharged the race to create products for profit as it simultaneously heaped unprecedented pressure on the company’s infrastructure and on the employees focused on assessing and mitigating the technology’s risks. This strained the already tense relationship between OpenAI’s factions — which Altman referred to, in a 2019 staff email, as “tribes.”

Maybe this humanity-first stuff would have worked in Europe. But in the US of A, that shining cathedral to zero-sum capitalism, no chance.

5. Final Thoughts

Much rending of clothes and beating of chests has happened all over the interwebs - mostly at the thought of a board that would set $86bn of value on fire. These people miss the entire point. The charter of the board was not to preserve shareholder value. It was to shepherd a technology and prevent misuse. They were empowered to fire Altman. And fire him they did.

Microsoft has, to its tremendous short-term benefit, bet a fair bit of its future on its OpenAI partnership. This goes beyond money, which Microsoft has plenty of (and much of which it hasn’t yet paid out, or has granted in the form of Azure credits).

OpenAI’s technology is built into a whole host of Microsoft’s products, from Windows to Office to ones most people have never heard of (I see you Dynamics CRM nerds!). Microsoft is also investing massively in infrastructure that is custom-built for OpenAI — Nadella has been touting the financial advantages of specialization — and has just released a custom chip that was tuned for running OpenAI models. That this level of commitment was made to an entity not motivated by profit, and thus un-beholden to Microsoft’s status as an investor and revenue driver, now seems absurd. Right?

Wrong:

What the last 7 days have made very clear is that Altman and Microsoft control the AI landscape for now. Microsoft has the cash, and soon the people it needs, without being hobbled by OpenAI, even as it remains partnered with OpenAI.

What this means is that Microsoft and Azure are now much more interesting to enterprise customers while Google has a fair bit of catching up to do. That leaves Anthropic, which looked like a big winner 12 hours ago, and now feels increasingly screwed over as a standalone entity. The company has struck partnership deals with both Google and Amazon, but it is now facing a Goliath of a competitor in Microsoft with effectively unlimited funds and GPU access; it’s hard to escape the sense that it makes sense as a part of AWS.

Meanwhile, we have a new Lex Luthor contender.

Aside: An alternate history of OpenAI

Generated entirely by ChatGPT. I had nothing to do with the text here:

OpenAI was founded as a nonprofit “with the goal of building safe and beneficial artificial general intelligence for the benefit of humanity.” But “it became increasingly clear that donations alone would not scale with the cost of computational power and talent required to push core research forward,” so OpenAI created a weird corporate structure, in which a “capped-profit” subsidiary would raise billions of dollars from investors (like Microsoft) by offering them a juicy (but capped!) return on their capital, but OpenAI’s nonprofit board of directors would ultimately control the organization. “The for-profit subsidiary is fully controlled by the OpenAI Nonprofit,” whose “principal beneficiary is humanity, not OpenAI investors.”

And this worked incredibly well: OpenAI raised money from investors and used it to build artificial general intelligence (AGI) in a safe and responsible way. The AGI that it built turned out to be astoundingly lucrative and scalable, meaning that, like so many other big technology companies before it, OpenAI soon became a gusher of cash with no need to raise any further outside capital ever again. At which point OpenAI’s nonprofit board looked around and said “hey we have been a bit too investor-friendly and not quite humanity-friendly enough; our VCs are rich but billions of people are still poor. So we’re gonna fire our entrepreneurial, commercial, venture-capitalist-type chief executive officer and really get back to our mission of helping humanity.” And Microsoft and OpenAI’s other investors complained, and the board just tapped the diagram — the first diagram — and said “hey, we control this whole thing, that’s the deal you agreed to.”

And the investors wailed and gnashed their teeth but it’s true, that is what they agreed to, and they had no legal recourse. And OpenAI’s new CEO, and its nonprofit board, cut them a check for their capped return and said “bye” and went back to running OpenAI for the benefit of humanity. It turned out that a benign, carefully governed artificial superintelligence is really good for humanity, and OpenAI quickly solved all of humanity’s problems and ushered in an age of peace and abundance in which nobody wanted for anything or needed any Microsoft products.

And capitalism came to an end.

Housekeeping:

As always, I look forward to hearing from you. If you liked this post, pls feel free to share this or subscribe to this newsletter using the links below. While I have been tardy of late, I try to write a 1000-2000 word essay once every 4 weeks or so.

Source paywalled, not bothering to link hence.

Quote from the video:

GPTs are tailored versions of ChatGPT for a specific purpose. You can build a GPT — a customized version of ChatGPT — for almost anything, with instructions, expanded knowledge, and actions, and then you can publish it for others to use. And because they combine instructions, expanded knowledge, and actions, they can be more helpful to you. They can work better in many contexts, and they can give you better control. They’ll make it easier for you to accomplish all sorts of tasks or just have more fun, and you’ll be able to use them right within ChatGPT. You can, in effect, program a GPT, with language, just by talking to it. It’s easy to customize the behavior so that it fits what you want. This makes building them very accessible, and it gives agency to everyone.

We’re going to show you what GPTs are, how to use them, how to build them, and then we’re going to talk about how they’ll be distributed and discovered. And then after that, for developers, we’re going to show you how to build these agent-like experiences into your own apps.