Hello there!

How have you been?

Over the last few weeks I have been revisiting an old love: coding. I wrote a Twitter sentiment analysis engine using OpenAI. I had high hopes. Then I realized that the backtests were all over the place after 2017, mostly because that is when Twitter’s inexorable slide into the cesspool of the very basest of human expression (expressed 280 characters at a time) that it is today gained critical mass. It is the literal definition of Garbage In, Garbage Out.

Ah well. Now I am trying to build an engine that automatically assigns weights to Twitter accounts based on linguistic analysis. It’s not perfect, but this new strategy is delivering about 90% of the expected outcomes from this project. Another key issue is that companies are now using Twitter influencers to pump and dump their stocks. This is an age-old con, but it wreaks havoc on any attempt to introduce a trust score.

One hopes.
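For the curious, here is a rough sketch of why per-account weights matter. It is not my actual engine: the `Tweet` class, the `trust` scores and the account names are all made up for illustration, and I am assuming the trust scores have already been produced by some upstream linguistic analysis.

```python
from dataclasses import dataclass


@dataclass
class Tweet:
    account: str
    sentiment: float  # hypothetical score in [-1, 1] from an upstream model


def weighted_sentiment(tweets, trust, default_trust=0.1):
    """Trust-weighted mean sentiment; unknown accounts get a small default weight."""
    weighted_sum = 0.0
    total_weight = 0.0
    for tweet in tweets:
        weight = trust.get(tweet.account, default_trust)
        weighted_sum += weight * tweet.sentiment
        total_weight += weight
    return weighted_sum / total_weight if total_weight else 0.0


# Hypothetical data: one credible account and two pump-and-dump bots.
tweets = [
    Tweet("analyst_a", 0.2),
    Tweet("pump_bot_1", 1.0),
    Tweet("pump_bot_2", 1.0),
]
trust = {"analyst_a": 0.9, "pump_bot_1": 0.05, "pump_bot_2": 0.05}

naive = sum(t.sentiment for t in tweets) / len(tweets)
weighted = weighted_sentiment(tweets, trust)
print(f"naive={naive:.2f} weighted={weighted:.2f}")
```

The unweighted average gets dragged up by the two bots, while the weighted one stays close to the credible account. The hard part, of course, is getting the trust scores right in the first place.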

Along my meandering journey into these woods, I found a great investment theme which seems to be hitherto underexplored. So, I thought this deep dive should be about AI/ML infra and the lay of the land, to identify a few important themes. I will also point to a few example companies to illustrate each theme.

This is a technical write-up, so there will be a fair bit of jargon. I have tried to provide as many relevant links and footnotes as I can, but please don’t be scared. Use Google to find out more. This is a very exciting area.

A. Context

Think of anything that is done automatically for you and chances are there is some ML code behind it. Internet staples like personalized recommendations, banal items like image filters and productivity enhancements like autocomplete in Gmail - all of these run on ML.

Here is what the typical deployment cycle for an AI/ML app looks like:

The stage from Mathematical model to Testing is the simple part - one that a sharp college undergrad can do on their laptop using R/Scala/Wolfram or, if nothing else is available, MATLAB. The real headaches come in the remaining two stages, where the challenges of scaling, retraining and infrastructure management rear their ugly heads. Even the most cutting-edge setups have serious issues scaling up their ML applications in production.

There are multiple reasons for this - among which are nascency and the fact that ML infra is not standardized. Unlike the off-the-shelf dynamic brought to other areas of Web2.0/3.0 via APIs, cloud infra and testing automation, most ML Infra seems to be in-house / purpose built and sophistication is the domain of those with the largest budgets to throw at it. However, there are way too many companies who don’t have the time, knowledge, or resources but want to have ML as a tool in their toolbox.

But to put that statement in perspective - here is a very nice visual description of exactly how much of an ML stack the ML code actually is:

That tiny little square in the middle - that’s the ML Code. Everything else is Infra - which is not standardized AT ALL. Of all the effort needed to put an ML model in production, more than 90% of the effort has nothing to do with ML. Ouch!

B. Four Themes around ML Infra

As I explored this, and from my (admittedly minuscule) experience in building a scalable stack, I saw 4 themes that I feel are the key trajectories of value creation in this space. These are:

Improve Core Workflows - on the axes of Better, Faster, Cheaper.

Improving testing / retraining

Better Training Datasets

Automating the job of the ML engineer.

Now, neither is this a full list of all that could be done in this space, nor is this a MECE type breakdown. In fact, these categories overlap (a lot, sometimes). Think of this essay as a useful guide to map out the ecosystem and a way to slot any opportunity that comes your way into buckets.

Let’s dive in.

1. Improve Core Workflows - on the axes of Better, Faster, Cheaper

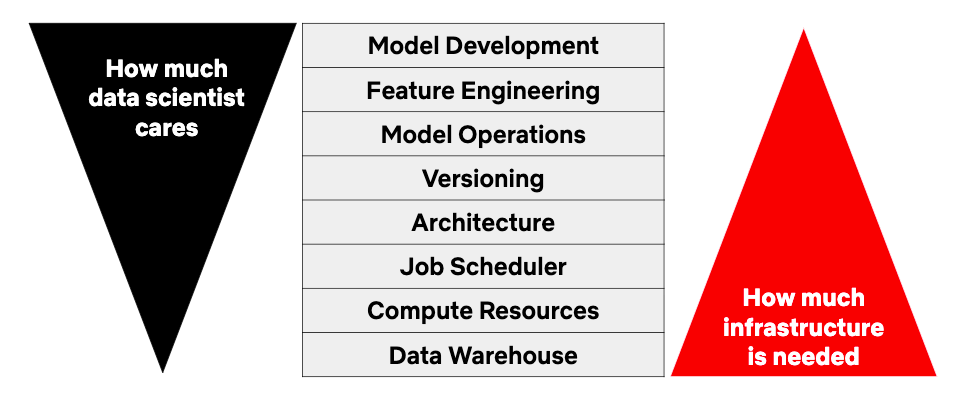

Let’s revisit a graphic from before -

What this shows is that 90% of the effort and infra needed to put an ML model into production has to do with things that an AI/ML engineer may not have any expertise in. Other challenges include the expense of training a model (in terms of compute, effort and time). Running a model to obtain its predictions (something called Inference) can also be extremely expensive. In fact, one of the holy grails is to achieve fast training with low-latency (i.e. low-cost) inference. From whatever I have gleaned so far, these are as yet unsolved problems. Now, this presents a few major opportunities:

ML in a Box: If you are using a standard architecture model (e.g. BERT, YOLO) that doesn’t need a ton of customization, there are end-to-end API providers who will do the majority of the heavy lifting for you. As an example, Hugging Face offers an automated NLP product (AutoNLP). You upload the dataset and it automatically identifies the best model, trains it on your data and even deploys it for you.

Deployment Optimization: Suppose you don’t need another person’s model but only need help with compute / setup. No issues - there are folks available to help out here as well. Mystic had a product that automatically found the fastest GPU for any job, with different rates for “serverless” training & prediction (in effect, auto-scaling compute). Neural Magic offers pre-trained “sparse” models and a way to sparsify existing models, so that models can be deployed on CPUs rather than on extremely expensive GPUs.
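To make “sparsify” a little more concrete, here is a minimal sketch of magnitude pruning, one of the simplest sparsification techniques. I am not claiming this is what Neural Magic does under the hood; the function name and the toy weights are my own, and real systems prune layer by layer and usually fine-tune afterwards to recover accuracy.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction `sparsity` of the weights."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


# Toy weight vector standing in for one layer of a model.
weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02, 0.1, -0.9]
sparse = magnitude_prune(weights, sparsity=0.5)
print(sparse)
```

The zeros only save money if the runtime can skip them, which is why sparsity tends to be sold together with a sparsity-aware inference engine.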

Dev Tools for Custom Model Deployment: When more customized engineering is required (i.e. past the standard models), AI/ML engineers face a variety of challenges throughout the ML lifecycle. ML models can easily take 12-18 months to get deployment ready. The unmet need here is removing the need for ML engineers to be backend/infrastructure rockstars to be successful. Now, many open-source frameworks have emerged - offering significant productivity benefits by automating infra and standardizing common workflow steps. A great example of this is Metaflow. Here is an image from one of their papers on their view of the ML stack:

Source: here

A great example is Outerbounds [1], which provides a “full infrastructure stack for production-grade data science projects”. Other notables include Anyscale, which offers scalability & observability for Ray [2], and OctoML, which automatically optimizes any given model’s performance for different hardware stacks while keeping accuracy constant.

Devtools and Hardware for Specialized Models: Ok, so far, the solutions we have seen have to do with standardized or semi-standardized use cases. What if you are doing something really complex, like robotics or spaceflight? As an example, consider the most common form of robot we may see - the self-driving car. Even today, self-driving cars suck up tons and tons of real-time data from sensors, LIDAR, GPS, and vehicle state. The time in which all of this must be digested and outputs given is highly limited. Testing, debugging and deployment can be expensive and very difficult. Real-time ML deployment also comes with specific infrastructure challenges; online systems must have fast prediction capabilities as well as a pipeline for data stream processing. Enter companies like Applied Intuition and Foxglove that provide simulation and debugging tools for these real-time deployments. Another category is feature stores [3] - and companies like Tecton and Featureform are doing a lot of the heavy lifting there. The last bucket has to do with highly customized hardware. Sometimes you just need a faster or a custom processor. It’s a low-hanging but extremely expensive option to make inference faster / cheaper / better. Some of the more important names here are Graphcore, Luminous Computing, Cerebras and Tensil.
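If feature stores sound abstract, here is a toy sketch of the core idea: write feature values as they arrive, and read the latest value per entity at prediction time. The class, the method names and the feature names are all hypothetical, and this is nowhere near what Tecton or Featureform actually ship (no persistence, no point-in-time joins, no streaming).

```python
class InMemoryFeatureStore:
    """Keeps only the most recent value of each (entity, feature) pair."""

    def __init__(self):
        self._rows = {}  # (entity_id, feature) -> (timestamp, value)

    def write(self, entity_id, feature, value, timestamp):
        key = (entity_id, feature)
        current = self._rows.get(key)
        # Ignore late-arriving data that is older than what we already hold.
        if current is None or timestamp >= current[0]:
            self._rows[key] = (timestamp, value)

    def read(self, entity_id, features, default=None):
        """Return the latest values in the order requested, for model input."""
        return [self._rows.get((entity_id, f), (None, default))[1] for f in features]


store = InMemoryFeatureStore()
store.write("car_7", "speed_mps", 12.5, timestamp=1)
store.write("car_7", "speed_mps", 14.0, timestamp=2)
store.write("car_7", "speed_mps", 11.0, timestamp=0)  # stale reading, dropped

vector = store.read("car_7", ["speed_mps", "lidar_points"])
print(vector)
```

The point is that the model code only ever asks for a named list of features, and everything about how they are computed and kept fresh lives somewhere else.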

Key challenges in this bucket have to do with the balance of self-serve vs traditional account-based sales models. The right balance of standardization vs flexibility is still evolving.

2. Improving testing / retraining

A key part of the ML lifecycle is testing, retraining and making models more reliable. This is especially relevant in environments like Amazon or Netflix, where the models are fine-tuned on a per-customer basis - effectively having a separate model for each customer. The number adds up. Quickly.

Key requirements here are stress testing models before deployment, monitoring them in production, and automatically triggering retraining when necessary. Now, historically, there has been no real way to standardize these actions, but there are people trying. Here are the key buckets:

Active (or proactive) Stress Testing: The nascency of the AI/ML space means that, unlike standard web / production applications, there are no widely accepted best practices for stress-testing models. Companies in this space offer out-of-the-box tests or functionality to make user-specified edge case tests, ultimately providing more confidence that models are safe to deploy. However, the sales pipeline here has a significant challenge. The user, i.e. the ML engineer, may understand the utility best but may not have the necessary pull, while the VP of engineering holds the purse strings but may not have enough appreciation of the production challenges or of the utility of such products. It is unlikely to be an easy sale to make. Some of the important companies in this space are Efemarai and Kolena.
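Here is a small sketch of what one such edge case test can look like: an invariance test, which checks that harmless changes to the input do not flip the prediction. The keyword-counting `toy_sentiment` model and the perturbation names are stand-ins I made up; this is not how Efemarai or Kolena implement their products.

```python
def toy_sentiment(text):
    """Stand-in model: counts positive vs negative keywords."""
    positive = {"good", "great", "love"}
    negative = {"bad", "awful", "hate"}
    words = text.lower().split()
    score = sum(w in positive for w in words) - sum(w in negative for w in words)
    return "pos" if score > 0 else "neg" if score < 0 else "neutral"


def invariance_failures(model, text, perturbations):
    """Return the names of perturbations that change the model's prediction."""
    baseline = model(text)
    return [name for name, fn in perturbations.items() if model(fn(text)) != baseline]


# Edits a human would consider meaning-preserving.
perturbations = {
    "uppercase": str.upper,
    "extra_spaces": lambda s: s.replace(" ", "   "),
    "trailing_punct": lambda s: s + "!!!",
}

failures = invariance_failures(toy_sentiment, "this stock is great", perturbations)
print(failures)
```

The toy model survives shouting and sloppy spacing but falls over on three exclamation marks, which is exactly the kind of thing you want to find before deployment rather than after.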

Custom Inference / Automatic Retraining: One of the most resource- and cost-intensive parts of the ML lifecycle is setting up inference and retraining steps. The important questions are when a custom inference pipeline should be favoured and under what conditions a model should be retrained. People have views, but there is little consensus (and that has a lot to do with the nature of these applications and the secrecy around them, e.g. self-driving cars, drones etc.). For certain specialized users it is a five-alarm fire. Key startups here are Bytewax, which offers ways to build custom inference pipelines including A/B testing and branching, and Gantry, which sets up automatic retraining of models when it is beneficial to do so.
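One simple way to think about “when should I retrain” is as a drift check: compare what the model sees in production against what it was trained on, and raise a flag when the two diverge. The sketch below uses a crude mean-shift score on a single feature. The function names, the threshold of 2.0 and the data are my own assumptions, and I am not suggesting this is how Gantry decides.

```python
import statistics


def drift_score(reference, recent):
    """Shift of the recent mean from the reference mean, in reference std-devs."""
    ref_mean = statistics.fmean(reference)
    ref_std = statistics.stdev(reference)
    recent_mean = statistics.fmean(recent)
    if ref_std == 0:
        return 0.0 if recent_mean == ref_mean else float("inf")
    return abs(recent_mean - ref_mean) / ref_std


def should_retrain(reference, recent, threshold=2.0):
    """Flag retraining when the feature has drifted past the threshold."""
    return drift_score(reference, recent) > threshold


# Hypothetical values of one input feature at training time vs in production.
training_values = [10, 11, 9, 10, 12, 8, 10, 11]
stable_window = [10, 9, 11, 10]
shifted_window = [15, 16, 14, 15]

print(should_retrain(training_values, stable_window))
print(should_retrain(training_values, shifted_window))
```

Real monitoring looks at many features, at the prediction distribution and at delayed ground truth, but the shape of the decision is the same: measure, compare to a threshold, trigger.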

3. Better Training Datasets

Accuracy of an ML model is heavily dependent on the accuracy and the amount of the data it is trained on. Labelling (i.e. categorizing and sorting data) is extremely challenging, which has led to the emergence of solution providers like Scale. Yet another strategy is to create synthetic data (example) or to “unlock” otherwise sensitive data with a variety of techniques.

Synthetic Training Data: To achieve high accuracy levels on complex ML use cases, the industry standard is combining data verified by humans with data generated automatically. A great example of this is from self-driving cars, where companies like Waymo reuse real-world data many times over by applying transformations. Similarly, many industrial robotics companies use a combination of simulation and real-world scenarios for training / testing. Some providers are particularly focused on verticals, like SBX Robotics or Parallel Domain (computer vision), while others offer data annotation and management services, like Scale. Game engines like Unreal Engine or Unity also provide tools for synthetic data generation.
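“Applying transformations” is easier to picture with a toy example. The sketch below takes one tiny grayscale image (a nested list of pixel values) and produces several variants by random flipping and brightness shifts. The function names and parameter ranges are my own choices for illustration; production pipelines use proper image libraries and far richer transformations.

```python
import random


def hflip(image):
    """Mirror each row of the image left to right."""
    return [list(reversed(row)) for row in image]


def adjust_brightness(image, delta):
    """Add delta to every pixel, clamped to the valid 0..255 range."""
    return [[min(255, max(0, px + delta)) for px in row] for row in image]


def augment(image, rng, n_variants=4):
    """Generate variants of one labelled image; the label stays the same."""
    variants = []
    for _ in range(n_variants):
        out = hflip(image) if rng.random() < 0.5 else [row[:] for row in image]
        out = adjust_brightness(out, rng.randint(-30, 30))
        variants.append(out)
    return variants


image = [[0, 50, 100], [150, 200, 250]]
variants = augment(image, random.Random(0))
print(len(variants))
```

One labelled image becomes four training examples for free, which is the whole economic argument for synthetic data.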

“Unlocking” Real Data: Much real-world data is unavailable to third parties due to regulations, commercial sensitivities or simple confidentiality concerns. Such data can be unlocked using techniques like anonymization, differential privacy, federated learning, and homomorphic encryption. However, some of the most relevant and useful datasets, e.g. in healthcare and finance, are completely locked off and unavailable for even basic analytics except for in-house use cases. Important companies here are Tonic AI, TripleBlind, LeapYear, Zama, Owkin, Apheris, Devron, Privacy Dynamics.
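Of the techniques above, differential privacy is the easiest to show in a few lines. The sketch below is the textbook Laplace mechanism applied to a counting query: add noise scaled to 1/epsilon so that any single record barely changes the answer. The patient records and function names are made up, and I am not describing any particular vendor’s implementation.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng):
    """Noisy count; a counting query has sensitivity 1, so scale = 1/epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical patient records: (age, has_condition).
records = [(34, True), (51, False), (47, True), (29, False), (62, True)]

rng = random.Random(42)
noisy = private_count(records, lambda r: r[1], epsilon=1.0, rng=rng)
print(round(noisy, 2))
```

A smaller epsilon means more noise and more privacy. The analyst gets an answer that is useful in aggregate without being able to tell whether any one patient is in the dataset.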

4. Automating the job of the ML Engineer

Remember the Matrix? Programs writing programs. The concept was a little too mind blowing for me.

Now, in an age where AI is emerging rapidly, do I need to be a full-fledged AI/ML developer to address a use case where I want some ML features? Take my Twitter sentiment analysis engine as an example. The first iteration took me only 6 days to write. Many companies in this category are platforms that democratize access to ML. However, the questions of how technical a user has to be and how general the set of solved use cases is are as yet unanswered.

Semi-custom models for Specific Verticals: Companies like Continual offer ML solutions to data analysts in areas like churn prediction, conversion rate optimization, or forecasting, through an end-to-end solution that integrates with the data stack. A little farther along the spectrum are Mage and Tangram, which target product developers at early-stage startups. Seriously insane shit. Other companies offer vertical-specific solutions, like Taktile, which targets finance. Other platforms offer models for a variety of end users and use cases, e.g. Dataiku and DataRobot. Some other interesting companies in this bucket are Pecan, Corsali, Obviously AI, Noogata and Telepath.

Robotic Process Automation: Robotic process automation (RPA), a type of “no code” automation tool, has exploded of late. Many large use cases for RPA replace routine human tasks that don’t require machine learning. However, as more and more of such work is automated, it is becoming increasingly clear how much additional automation cutting-edge ML can enable. Relatively mature players like UiPath and Automation Anywhere favour horizontal capabilities, while relative upstarts like Instabase, Olive AI, and Klarity have vertical-specific offerings in high-interest areas like finance, health, and accounting. Also, setting up these types of workflows frequently requires many specialized “RPA developers” and RPA consulting firms, which is a clear opportunity for incumbents and newer players to succeed as system integration partners.

C. Conclusion

I hope this post was a useful deep dive, a way to map out the space, and a help in evaluating any opportunities that may come your way. The opportunities are significant, and there are plenty of technical & go-to-market challenges for ML infrastructure startups. Once again - uncertainty creating profit opportunities.

D. Asides

Here is an excerpt from the exchange filings of Panchsheel Organics Limited. I wish to find out more - like how do you get the names of investors wrong? In what world is Murli Manohar Lahoti someone you mistake for Jyoti Vikas Kasat?

Housekeeping

As always, I look forward to hearing from you. If you liked this post, please feel free to share it or subscribe to this newsletter using the links below. I try to write a 1000-2000 word essay once every two weeks or so.

[1] Outerbounds is an early-stage commercialization of the Metaflow project (spun out of Netflix). Their focus is on data scientists being able to experiment with new ideas in production quickly, even if the models are data-hungry & require integration with existing business systems.

[2] Ray is an API for distributed applications, with libraries for accelerating machine learning workloads.

[3] In machine learning and pattern recognition, a feature is an individual measurable property or characteristic of a phenomenon. Like: Vatsal has a big nose. It’s my feature. Think of a feature as an important variable for a system. You first have to figure out which ones are important and then figure out how to use them in your ML stack. My nose is not an important feature for most applications.